In 2012, breaking RSA-2048 on a quantum computer would have required approximately one billion physical qubits. In May 2025, Craig Gidney at Google published a revised estimate: fewer than one million. The machine does not exist yet. The maths keeps improving as if it does.

That compression (roughly 1,000-fold in thirteen years) is the number that belongs in every board-level quantum risk discussion, including the many that are not yet happening. It is not a vendor claim or a think-tank projection. It comes from peer-reviewed papers in the public domain, built on the same surface code error correction framework that security bodies in the US, UK, Germany and France have used as the technical foundation for their guidance. As those estimates have fallen, the guidance has tightened. The gap between "this is theoretically possible" and "you needed to have started migrating already" is closing.

This article tracks both curves: the algorithmic resource estimates and the institutional policy response. The two do not move in lockstep, and the gap between them is where organisations get into trouble. I have structured the piece chronologically because the sequence matters. The policy decisions follow the technical results, typically with a lag of two to three years. Understanding that lag is the practical lesson.

Before diving into the history, the framing that makes the urgency precise: the Mosca Theorem. Formulated by Dr Michele Mosca at the University of Waterloo and the Global Risk Institute, it states that if the remaining lifetime of data requiring protection, plus the time needed to complete migration, exceeds the time before a cryptographically relevant quantum computer (CRQC) arrives, the data is at risk. The theorem does not require knowing Q-Day precisely. It requires only a judgement on whether the data sensitivity window plus the migration timeline is longer than the time plausibly remaining before the threat arrives. The Global Risk Institute's 2025 quantum threat timeline report (the sixth annual edition, led by Mosca) places the median expert estimate for a CRQC at 2029-2032, with a 34% probability by 2030. At that range, the theorem is not theoretical for most large organisations. It is operational.

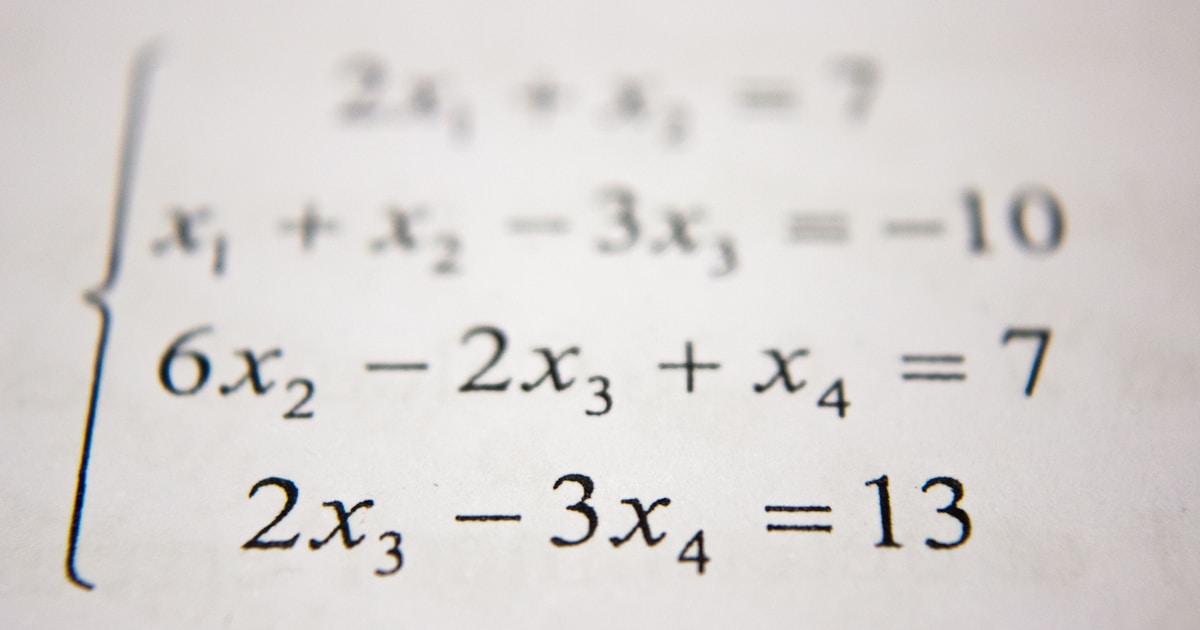

Three anchor points, thirteen years, three orders of magnitude. Physical qubit estimates incorporate surface code error correction overhead at a physical gate error rate of approximately 10^-3. The Beauregard (2003) point is a logical qubit estimate and is not directly comparable. Projections are log-linear extrapolations and should not be treated as engineering specifications.

Sources: Beauregard (2003): arXiv:quant-ph/0205095 | Fowler et al. (2012): Nature Physics | Gidney and Ekerå (2019/2021): Quantum journal | Gidney (2025): arXiv:2505.15917

What Shor's Algorithm Actually Requires

Peter Shor's 1994 paper, arXiv:quant-ph/9508027, later published in SIAM Journal on Computing in 1997, demonstrated that integer factorisation is solvable in polynomial time on a quantum computer. That is the theoretical foundation for every risk estimate that follows. Shor showed that the mathematical structure RSA depends on, the difficulty of factoring the product of two large primes, is not actually difficult for a quantum machine running his algorithm. On a conventional computer, factoring a 2048-bit number would take longer than the age of the universe. On a sufficiently powerful quantum computer running Shor's algorithm, hours.

The original paper did not contain a qubit count. It was a proof of concept: the algorithm works in principle. The engineering question of how many physical components a real machine would actually need came later. The first serious answer arrived in 2002, when Stephane Beauregard published a circuit-level analysis at arXiv:quant-ph/0205095. Beauregard's result: 2n+3 logical qubits for an n-bit integer. For RSA-2048, that works out to 4,099 logical qubits. A small number, superficially. The problem is what "logical" means.

This is the distinction that most risk discussions skip, and skipping it leads to badly calibrated threat assessments. Logical qubits assume perfect, error-free operation. Quantum hardware does not work that way. Physical qubits, the actual components in a real machine, make errors at rates that are orders of magnitude too high to run Shor's algorithm directly. The solution is quantum error correction: encoding one logical qubit across many physical qubits so that errors can be detected and corrected without disturbing the computation. The overhead depends on the error correction scheme and the physical error rate. At realistic noise levels (a physical gate error rate of roughly 10^-3, which is the standard assumption in surface code analysis) the ratio of physical to logical qubits runs from approximately 1,000:1 to 10,000:1. [INFERRED: standard surface code literature; Fowler et al. (2012), Nature Physics is the primary reference for the physical qubit calculation that follows.]
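The overhead arithmetic can be sketched with a widely used surface-code heuristic: the logical error rate per qubit falls roughly as `prefactor * (p / p_threshold) ** ((d + 1) / 2)` for code distance `d`, and one logical qubit occupies roughly `2 * d**2` physical qubits. The formula, the threshold of 10^-2, the prefactor of 0.1, the footprint and the per-qubit error targets below are all assumptions chosen for illustration, not figures from the papers discussed here. Real machines also need space for routing and magic state production, which pushes the ratio higher.

```python
def logical_error_rate(p_phys, distance, p_threshold=1e-2, prefactor=0.1):
    """Heuristic per-logical-qubit error rate for a distance-d surface code (assumed model)."""
    return prefactor * (p_phys / p_threshold) ** ((distance + 1) / 2)


def min_distance(p_phys, target_error):
    """Smallest odd code distance whose heuristic error rate meets the target."""
    distance = 3
    while logical_error_rate(p_phys, distance) > target_error:
        distance += 2  # surface code distances are conventionally odd
    return distance


def physical_per_logical(distance):
    """Approximate footprint of one logical qubit: data plus measurement qubits (assumed 2*d^2)."""
    return 2 * distance ** 2


# Illustrative error budgets per logical qubit; both targets are hypothetical.
for target in (5e-13, 5e-16):
    d = min_distance(1e-3, target)
    print(f"target {target:.0e}: distance {d}, ~{physical_per_logical(d)} physical per logical")
```

Under these assumptions the ratio lands at the lower end of the 1,000:1 to 10,000:1 range quoted above, and tightening the error budget raises it.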

The first rigorous physical qubit estimate incorporating surface code error correction came from Fowler, Stephens and Grover (2012). Their figure: approximately one billion physical qubits to factor RSA-2048. That number became the canonical government baseline. When NIST opened its post-quantum standardisation programme in 2016, it was the Fowler et al. figure that underpinned the technical case for urgency. When intelligence agencies started updating their cryptographic guidance in 2015, it was this estimate they had in mind. One billion physical qubits is a machine that does not remotely resemble anything in existence. The largest quantum processors in 2012 had a handful of qubits with much worse error rates than the analysis assumed. The threat felt distant. That perception aged poorly.

A brief note on the terminology I will use throughout. A CRQC (cryptographically relevant quantum computer) means specifically a device capable of running Shor's algorithm on RSA-2048 or similar at production scale. It is the term of art that distinguishes a threat-capable machine from the research-scale quantum computers that exist today. When I write "Q-Day," I mean the point at which a CRQC becomes operational in the hands of an adversary.

2015 to 2016: The Policy Window Opens

The institutional response to Shor's result did not arrive until 2015, twenty-one years after the paper was published. That lag is not unusual in policy. The conversion of theoretical risk into institutional action requires a combination of evidence, mandate and political will that rarely moves quickly. What happened in 2015 was that at least one major intelligence body decided the evidence had crossed a threshold.

August 2015: NSA replaces Suite B with the CNSA Suite

In August 2015, the US National Security Agency issued an advisory explicitly citing quantum computing as the driver for replacing its Suite B algorithms with the Commercial National Security Algorithm (CNSA) Suite. The document was blunt about the direction of travel: organisations that had not yet migrated to Elliptic Curve Cryptography were advised not to bother, because the post-quantum transition would supersede that migration anyway. The minimum RSA key size for new systems was raised to 3,072 bits. The CNSA Suite FAQ, published January 2016, confirmed that quantum computing had become the driver for a wholesale re-evaluation of US national security cryptographic standards.

This was the first explicit public acknowledgement from a major intelligence body that quantum computing posed a near-term strategic cryptographic threat. It is worth noting what the advisory did not contain: no Q-Day estimate, no migration deadline, no specification of which post-quantum algorithms to use. The NSA knew the direction. It did not yet have a timetable or replacement candidates.

April 2016: NIST IR 8105

In April 2016, NIST published NIST Internal Report 8105, formally launching what would become the most consequential cryptographic standardisation exercise since DES. The document stated: "If large-scale quantum computers are ever built, they will be able to break many of the public-key cryptosystems currently in use." NIST used the conditional. That conditional is significant. In 2016, the Fowler et al. billion-qubit figure was still the reference point, and billion-qubit machines were speculative enough that "if" was a defensible framing. The report nonetheless recommended that organisations begin considering post-quantum alternatives and solicited candidate algorithms from the global cryptographic research community.

No qubit estimate. No Q-Day. A competition with no stated end date.

November 2016: NCSC's first quantum paper

The UK's National Cyber Security Centre entered the record in November 2016 with a position paper on quantum security technologies. The document's central output was a non-endorsement of quantum key distribution (QKD) for government use. NCSC's quantum security whitepaper declined to recommend QKD as a replacement for conventional cryptographic controls, citing practical deployment limitations and the absence of implementation standards. It was a careful, technically conservative document. It contained no migration deadline and no Q-Day estimate.

The absence of deadlines in 2015-2016 is itself data. These bodies knew Shor's result and Fowler's estimate. They started the process. They did not yet have a date, because the hardware was far enough away that no responsible analyst was prepared to commit to one. That changed in 2019.

2019: Gidney-Ekerå and the 50-Fold Reduction

In May 2019, Craig Gidney at Google and Martin Ekerå at the KTH Royal Institute of Technology in Stockholm published a paper that restructured every risk model built on the Fowler et al. baseline. The title was direct: "How to factor 2048 bit RSA integers in 8 hours using 20 million noisy qubits." The paper is arXiv:1905.09749, later published in the Quantum journal in April 2021.

Twenty million is not one billion. That reduction (approximately 50-fold) did not happen because anyone found a flaw in Fowler's work. It happened because Gidney and Ekerå developed substantially better quantum arithmetic: carry-save techniques and windowed modular exponentiation that dramatically reduced circuit depth. Fewer gates mean fewer error correction cycles per computation. Fewer error correction cycles mean fewer physical qubits per logical qubit at the same error rate. The architectural assumptions remained the same: superconducting surface code, physical gate error rate of roughly 10^-3, one-microsecond cycle time, ten-microsecond reaction time. The circuit ran in 8 hours at roughly one-fiftieth of the previously estimated hardware requirement.

This is not a pedantic technical distinction. A billion-qubit machine was firmly in the realm of speculation. Twenty million qubits is still a machine that does not exist, but the distance between current hardware and the threat threshold has contracted by a factor of fifty. Risk timelines that looked comfortable in 2012 looked considerably less so in 2019. The Gidney-Ekerå figure became the new canonical baseline that NIST, NSA, NCSC, BSI and ANSSI all referenced in their post-2019 guidance.

The policy consequences followed. They did not follow immediately, but they followed.

The HNDL problem: why the deadline predates Q-Day

Before tracking the policy acceleration, one concept needs to be in place, because it explains why migration deadlines are set years before Q-Day rather than at Q-Day. HNDL (Harvest Now, Decrypt Later) is the practice of collecting encrypted network traffic today and storing it for decryption once a CRQC becomes available. Nation-state actors with the collection infrastructure and long-term analytical programmes to make this worthwhile are presumed to be doing it now. The NSA, NIST, CISA and NCSC have all endorsed this threat model; the Mosca Theorem formalises the mathematics. Data encrypted today with RSA-2048 or ECDH that requires confidentiality beyond the expected Q-Day range is at risk under HNDL whether or not a CRQC currently exists. This is why the migration deadline is not Q-Day. It is Q-Day minus the migration lead time.

September 2022: NSA CNSA 2.0

In September 2022, the NSA published CNSA 2.0 (PP-22-1338), the first explicit quantum-safe mandate from a US government body. The document moved from advisory to directive for National Security Systems. The deadlines: new NSS acquisitions must be CNSA 2.0-compliant from 1 January 2027; all NSS must be exclusively CNSA 2.0 by 2030; complete transition by 2035. CNSA 2.0 specified exact algorithm parameter sets: ML-KEM-1024 for key encapsulation, ML-DSA-87 for digital signatures, the hash-based LMS and XMSS schemes for software and firmware signing, AES-256, and SHA-384. This level of specificity was new. Previous guidance named algorithm families; CNSA 2.0 named exact parameter sets. For US defence contractors and national security system operators, the 2027 deadline for new acquisitions is now approximately twelve months away.

May 2022: White House NSM-10

Four months before CNSA 2.0, in May 2022, the White House issued National Security Memorandum 10 (NSM-10), the first US presidential directive on quantum cryptographic risk. NSM-10 directed federal agencies to complete migration to quantum-resistant cryptography by 2035 and assigned lead responsibility to CISA and NIST. The memorandum also tasked agencies with producing cryptographic inventories, reflecting the reality that the migration problem cannot be scoped without first knowing what is actually deployed.

March 2022: ANSSI's first formal position

In March 2022, France's national cybersecurity agency, the Agence nationale de la sécurité des systèmes d'information (ANSSI), published its first formal post-quantum position paper. The ANSSI position is technically the most conservative of the major bodies, in a way that reflects considered caution rather than complacency: ANSSI mandates hybrid cryptography, meaning the simultaneous deployment of a classical algorithm and a post-quantum algorithm, with security guaranteed as long as either remains unbroken. Pure post-quantum-only deployment is not yet approved for ANSSI-certified sensitive applications. The rationale is that post-quantum algorithms are newer and have had less cryptanalytic scrutiny. Hybrid deployment hedges against both the quantum threat and the risk of a classical cryptanalytic break of a post-quantum scheme. The 2030 target for most sensitive applications was set in this document.
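The "secure as long as either remains unbroken" property of hybrid key exchange can be illustrated with a minimal sketch: both shared secrets are concatenated and passed through a key derivation function, so an attacker needs both inputs to reconstruct the session key. The function names, the context label, the fixed concatenation order and the choice of HKDF with SHA-384 are assumptions for illustration, and the random bytes stand in for real ECDH and ML-KEM outputs. This is not the construction mandated by ANSSI or any specific protocol.

```python
import hashlib
import hmac
import os

HASH = hashlib.sha384  # assumption: SHA-384, chosen only for illustration


def hkdf_extract(salt, input_key_material):
    """HKDF-Extract (RFC 5869): condense the input into a pseudorandom key."""
    return hmac.new(salt, input_key_material, HASH).digest()


def hkdf_expand(pseudorandom_key, info, length):
    """HKDF-Expand (RFC 5869): stretch the pseudorandom key to `length` bytes."""
    output, block, counter = b"", b"", 1
    while len(output) < length:
        block = hmac.new(pseudorandom_key, block + info + bytes([counter]), HASH).digest()
        output += block
        counter += 1
    return output[:length]


def derive_hybrid_key(classical_secret, pq_secret, context=b"hybrid-kex-sketch", length=32):
    """Derive one session key from both secrets; recovering it requires knowing both."""
    if not classical_secret or not pq_secret:
        raise ValueError("both shared secrets are required")
    # Both sides must agree on the concatenation order and on fixed secret lengths.
    combined = classical_secret + pq_secret
    pseudorandom_key = hkdf_extract(b"\x00" * HASH().digest_size, combined)
    return hkdf_expand(pseudorandom_key, context, length)


# Placeholders: in practice these come from an ECDH exchange and an ML-KEM decapsulation.
ecdh_secret = os.urandom(32)
mlkem_secret = os.urandom(32)
session_key = derive_hybrid_key(ecdh_secret, mlkem_secret)
print(len(session_key), "byte session key derived")
```

A production deployment would take both secrets from a vetted cryptographic library and follow the combiner defined by the protocol in use rather than this hand-rolled version.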

BSI TR-02102-1: the first concrete sunset date

Germany's Federal Office for Information Security, the BSI, had been updating its technical guideline TR-02102-1 annually. The March 2020 update (version 2020-01) included the first specific post-quantum-related sunset: RSA-2000 declared conformant only until end of 2023. BSI TR-02102-1 Version 2020-01 marked the beginning of a consistent annual update cycle that would eventually specify migration targets for all RSA and ECC key sizes. The BSI approach, with annual updates, explicit conformance windows, and algorithm-by-algorithm deprecation, became a model for how to operationalise migration guidance.

Each point represents a body issuing a migration deadline: X-axis is when the guidance was published, Y-axis is the deadline year stated. The diagonal reference line marks where "year published" equals "deadline year". Points above it have lead time; points close to it have very little. The NSA 2027 new-acquisitions deadline, issued in 2022, is now approximately twelve months away.

Sources: NSA CNSA 2.0 (September 2022) | NIST IR 8547 IPD (November 2024) | NCSC PQC Migration Timelines (March 2025) | BSI TR-02102-1 (Version 2026-01) | ANSSI Position Paper (March 2022)

August 2024: The Standards Are Available

The NIST post-quantum standardisation competition ran from 2016 to 2024: eight years. Security competitions of that duration are not unusual when the technical stakes are high and the cryptographic community insists on thorough scrutiny. Three successive rounds eliminated candidates with structural weaknesses, including some that fell in the later rounds in ways that surprised their proposers. The survivors are the ones available for deployment now.

On 13 August 2024, NIST published the final versions of its first three post-quantum standards, effective immediately. FIPS 203 (ML-KEM), FIPS 204 (ML-DSA), and FIPS 205 (SLH-DSA) are the operational standards. ML-KEM (the Module-Lattice Key Encapsulation Mechanism, derived from CRYSTALS-Kyber) is the primary replacement for RSA and ECDH in key exchange. ML-DSA, derived from CRYSTALS-Dilithium, is the primary replacement for RSA and ECDSA in digital signatures. SLH-DSA, derived from SPHINCS+, provides a hash-based signature alternative with different performance characteristics. A fourth standard, FN-DSA (FIPS 206, based on FALCON), ran concurrently and is expected to finalise in 2025. [INFERRED: NIST CSRC project page; NIST IR 8547 IPD November 2024 confirms FIPS 206 in progress.]

For anyone tracking the competition closely, August 2024 was the point at which "this will happen eventually" became "this is available now." The standards are published. Implementations exist. The question shifted from "when will the replacements be available" to "when will you have deployed them." Those are different questions with very different answers depending on the organisation.

In November 2024, NIST published NIST IR 8547 (Initial Public Draft), the deprecation schedule for classical algorithms. RSA-2048 and ECC P-256 deprecated after 2030 in federal systems (no new deployments). Disallowed after 2035. For organisations in scope of US federal requirements, which includes a substantial portion of the global defence supply chain and any company processing US federal government data, this is the compliance timeline. Not advisory. A schedule.

The NCSC followed in March 2025 with the UK's first formal three-phase migration timeline. NCSC's PQC migration timelines guidance set Phase 1 as discovery and planning to 2028, Phase 2 as high-priority upgrades from 2028 to 2031, and Phase 3 as complete migration from 2031 to 2035. This is NCSC's first guidance with explicit calendar anchors. For UK CNI operators and government systems, Phase 1 ends in two years. That is not a long planning horizon for estates of any complexity.

ANSSI published a follow-up position paper in October 2023, confirming the 2030 target for sensitive applications and noting that first ANSSI product security visas for hybrid PQC products were expected in 2024-2025. ANSSI's follow-up position paper maintained the hybrid mandate and added specificity on which algorithm combinations meet the requirement. The current version of BSI TR-02102-1, version 2026-01, recommends ML-KEM, ML-DSA, and SLH-DSA alongside FrodoKEM and Classic McEliece, with migration targets of 2030 for critical infrastructure and 2032 for all organisations.

The irony worth noting: just as the standards were finalised, the physical qubit estimate fell again.

May 2025: Gidney Again, and What the Trend Line Shows

In May 2025, Craig Gidney published a second paper: "How to factor 2048 bit RSA integers with less than a million noisy qubits." The preprint is arXiv:2505.15917. The estimate: approximately 1,409 logical qubits at peak, and 897,864 physical qubits. Gidney states both conservatively in the abstract ("fewer than one million physical qubits"), which is accurate. The runtime is 4.96 days, which he rounds to "less than a week." [VERIFIED: arXiv:2505.15917v1, Table 5, n=2048; accessed 2026-04-16]

For context on where theoretical estimates currently sit: in February 2026, Iceberg Quantum published a resource estimate claiming RSA-2048 factoring with fewer than 100,000 physical qubits, using quantum low-density parity-check (QLDPC) codes rather than surface codes. QLDPC codes require qubit connectivity that has not been demonstrated at scale in any physical hardware platform. This is not a successor to the Gidney surface-code trajectory. It is a theoretical lower bound under a different set of hardware assumptions, worth tracking, but not yet a basis for revising the timeline.

Gidney's 2025 figure is a further approximately 20-fold reduction from the 2019 Gidney-Ekerå estimate. The methods: approximate residue arithmetic, yoked surface codes, improved magic state cultivation. Each represents a genuine algorithmic advance, not a relaxation of the error assumptions. The underlying architectural parameters remain the same as 2019 (superconducting surface code, error rate approximately 10^-3, one-microsecond cycle time) and the runtime is now under one week. Combined with the 2019 reduction from Fowler, the total reduction from 2012 to 2025 is approximately 1,000-fold.

Three anchor points, thirteen years, three orders of magnitude:

| Year | Estimate (physical qubits) | Paper |
|---|---|---|
| 2012 | ~1,000,000,000 | Fowler, Stephens, Grover |
| 2019 | ~20,000,000 | Gidney and Ekerå |
| 2025 | <1,000,000 | Gidney |

The reduction across those three data points follows a log-linear pattern. When the physical qubit counts are expressed on a natural logarithm scale (20.72 in 2012, 16.81 in 2019, 13.71 in 2025) the decline is approximately linear at a rate of 0.54 per year. [INFERRED: log-linear OLS regression on three verified anchor points.]

What the trend line suggests about 2028 and 2030

A log-linear extrapolation of the three available data points (and it must be stated plainly that three points is not a large sample for a statistical projection) produces the following estimates for future algorithmic requirements:

- At 2028: approximately 202,000 physical qubits

- At 2030: approximately 70,000 physical qubits

The confidence interval is wide. If the rate of algorithmic improvement slows by 50%, the 2030 estimate rises to approximately 265,000. If the rate holds or accelerates, it falls further. [INFERRED: log-linear OLS extrapolation; full methodology available in the QSECDEF canonical dataset; a two-parameter fit to three data points leaves only one residual degree of freedom.] Neither bound represents a machine that currently exists. What the trend shows is that the threshold is not fixed and the direction of travel is clear.
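The extrapolation can be reproduced in outline with a few lines of Python. This is a minimal sketch, not the QSECDEF methodology: it assumes an ordinary least squares fit of the natural log of the qubit count against publication year, and it treats the 2025 headline figure of "fewer than one million" as exactly 1,000,000. The function names are hypothetical.

```python
import math

# Anchor points from the table above: (publication year, physical qubits).
# Assumption: the 2025 "<1,000,000" headline figure is entered as 1e6.
ANCHORS = [(2012, 1e9), (2019, 2e7), (2025, 1e6)]


def fit_log_linear(points):
    """Least squares fit of ln(qubits) = intercept + slope * year."""
    n = len(points)
    years = [float(year) for year, _ in points]
    logs = [math.log(qubits) for _, qubits in points]
    year_mean = sum(years) / n
    log_mean = sum(logs) / n
    sxx = sum((x - year_mean) ** 2 for x in years)
    sxy = sum((x - year_mean) * (y - log_mean) for x, y in zip(years, logs))
    slope = sxy / sxx
    intercept = log_mean - slope * year_mean
    return slope, intercept


def project(year, slope, intercept):
    """Projected physical qubit requirement for a given year under the fitted trend."""
    return math.exp(intercept + slope * year)


slope, intercept = fit_log_linear(ANCHORS)
print(f"decline in ln(qubits) per year: {-slope:.2f}")
for year in (2028, 2030):
    print(year, round(project(year, slope, intercept)))
```

The output should land in the same range as the figures quoted above. The exact values shift with the anchor chosen for 2025 and with the fitting method, which is itself a reminder of how sensitive a three-point projection is.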

The hardware side of the equation is the binding constraint, not the algorithmic side. Current superconducting quantum processors (IBM's Eagle and Heron series, Google's Willow) operate in the range of 100 to 1,000+ physical qubits, but with error rates and connectivity that are not suitable for fault-tolerant computation at the scale Shor's algorithm requires. IBM's published quantum roadmap targets approximately 100,000 physical qubits in the early 2030s. [INFERRED: IBM Quantum roadmap public statements; exact figures are IBM public claims, not independently verified here.] The algorithmic threshold under the log-linear trend approaches that figure around 2029-2030. Hardware availability is expected to approach it around 2033, under current roadmaps. The gap between the two curves is approximately 3-4 years, which is broadly consistent with the expert survey consensus.

What the expert consensus says

The Global Risk Institute's 2025 quantum threat timeline report, the most consistently tracked expert survey on this question, gives a 49% probability of a CRQC within ten years, meaning by approximately 2035, and a 34% probability within five years, by approximately 2030. The median expert estimate from that survey is 2029-2032. The full report is available from the Global Risk Institute.

The log-linear qubit reduction trend and the GRI survey consensus are measuring related but different things. The trend in qubit estimates measures algorithmic efficiency: how many physical components does the best known algorithm require, under stated error correction assumptions. Q-Day requires both algorithmic efficiency and hardware capability to converge in the hands of an adversary. Hardware scaling is the current bottleneck. The trend tells us that when the hardware is sufficient, the algorithmic problem is already much closer to solved than the 2012 baseline suggested. The GRI survey tells us what experts think about when the hardware will be sufficient. At a 34% probability of a CRQC by 2030, any organisation with data requiring confidentiality through that period has a decision to make right now.

The model caveats, stated clearly

Three data points are the entire empirical basis for the projection. A two-parameter line fitted to three points leaves a single residual degree of freedom, so the closeness of the fit says little about its predictive power. It is directionally defensible as a representation of demonstrated improvement, and it is consistent with the GRI survey's median estimate, but it should not be treated as a precise engineering specification. Algorithmic improvement is not guaranteed to continue at this rate. The next major reduction could be larger, smaller, or not arrive for a decade. The projection assumes surface code error correction throughout; a different error correction paradigm would shift the estimate in either direction. All three data points come from papers in the public domain; classified research is not reflected here, and there is no reason to assume it would shift the estimate in the more comfortable direction.

Series A (red): GRI survey median Q-Day estimates by year of survey. Series B (green): the most urgent migration deadline in force at each point. The squeeze is the closing gap between them. For organisations with multi-year migration programmes, a gap of 3-4 years is insufficient. GRI 2022 and 2023 median figures are inferred from available GRI summary language; 2024 and 2025 are from published reports.

Sources: Global Risk Institute Quantum Threat Timeline 2025 | NSA CNSA 2.0 (2022) | NCSC Migration Timelines (2025) | NIST IR 8547 IPD (2024)

What This Means for Your Migration Planning

The Mosca Theorem applied to current numbers produces an uncomfortable result for many organisations. If your data needs to remain confidential for ten years from today (through to 2036) and your migration programme will take three to five years to complete, you need Q-Day to be at least thirteen to fifteen years away to have a comfortable margin. Under the GRI median estimate of 2029-2032, that margin is gone or closing. For data classified at national security level, or with lifetime sensitivity (genomic data, long-term legal records, government intelligence sources), the GRI estimate of a 34% probability by 2030 is not an acceptable residual risk under any credible risk management framework.
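The arithmetic in that paragraph can be written out as a small check of the Mosca inequality: data is at risk when shelf life plus migration time exceeds the time remaining before a CRQC. The function name is hypothetical, and the sketch assumes "today" is 2026, in line with the ten-year window ending in 2036.

```python
def mosca_margin(shelf_life_years, migration_years, years_to_crqc):
    """Years of slack; a negative value means shelf life + migration exceeds time to a CRQC."""
    return years_to_crqc - (shelf_life_years + migration_years)


CURRENT_YEAR = 2026  # assumption: "today" in the worked example above

# (label, migration duration in years, assumed CRQC arrival year from the GRI median range)
scenarios = [
    ("fast migration, late CRQC", 3, 2032),
    ("slow migration, early CRQC", 5, 2029),
]

for label, migration_years, crqc_year in scenarios:
    margin = mosca_margin(10, migration_years, crqc_year - CURRENT_YEAR)
    status = "at risk" if margin < 0 else "within margin"
    print(f"{label}: margin {margin:+d} years ({status})")
```

Both ends of the GRI median range leave a negative margin for data with a ten-year confidentiality requirement, which is the point the paragraph above makes in prose.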

HNDL makes this worse. I have seen organisations take comfort in the fact that a CRQC does not yet exist and treat the migration as a future-dated project. That reasoning ignores the collection problem. Long-lived sensitive data encrypted in 2024 with RSA-2048 or ECDH P-256 is potentially at risk from any adversary who is collecting now and waiting. The collection has already happened, or is happening. The decryption is what awaits the hardware. The data that has been harvested cannot be un-harvested.

The regulatory picture has also moved. These are not advisory frameworks any longer:

- NSA CNSA 2.0: new National Security System acquisitions must be CNSA 2.0-compliant from 1 January 2027. For US national security contractors, that deadline is approximately twelve months away.

- NIST IR 8547: RSA-2048 deprecated for new federal systems from 2030, disallowed from 2035. For any organisation dependent on NIST-compliant implementations, the 2030 date is the new system procurement threshold.

- NCSC's three-phase roadmap: Phase 1 discovery and planning complete by 2028. For UK critical national infrastructure operators, that is a two-year window.

- BSI TR-02102-1 Version 2026-01: 2030 for critical infrastructure, 2032 for all organisations. Referenced in NIS2 implementing guidance. For European regulated sectors, this is quasi-mandatory.

The practical framing I use with security teams is a four-step sequence, and the sequence matters because skipping step one makes steps two through four meaningless:

First, the cryptographic inventory: what algorithms are deployed, in which systems, protecting which data, at what key lengths. You cannot prioritise what you cannot see. This is the hardest step operationally because most large organisations have algorithm deployment that is poorly documented, especially in legacy and embedded systems. The cryptographic inventory process is covered separately in the insights library.

Second, classify by risk: which data has a sensitivity window extending beyond 2030, and which systems carry it. Not everything in your estate is equally exposed. A public-facing web server running ECDH today has a different risk profile from an air-gapped archive system holding thirty-year classified records.

Third, prioritise high-risk systems for early migration. The mistake I see repeatedly is treating PQC migration as a uniform programme that has to complete simultaneously across the estate. It does not. A risk-stratified programme migrates the highest-exposure systems first and accepts longer timelines for systems that carry lower-sensitivity, shorter-lived data.

Fourth, begin with hybrid. The ANSSI and BSI joint recommendation is to deploy ML-KEM plus ECDH hybrid key exchange per the IETF draft hybrid design. This approach protects against both the quantum threat and the residual risk of a classical cryptanalytic break of a post-quantum scheme. Hybrid deployment is the technically conservative position and the one that both European bodies have made mandatory for their most sensitive applications. It is also the approach that gives you the most optionality if the post-quantum algorithm landscape shifts before your migration completes.
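The first three steps of that sequence (inventory, classify, prioritise) can be sketched as a simple data structure and sort. Everything here is hypothetical: the record fields, the example systems, the set of algorithm names treated as quantum-vulnerable and the 2030 horizon are illustrative assumptions, and a real inventory would carry far more detail per asset.

```python
from dataclasses import dataclass

# Assumption: public-key algorithm families broken by Shor's algorithm.
QUANTUM_VULNERABLE = {"RSA", "ECDH", "ECDSA", "DH", "DSA"}


@dataclass(frozen=True)
class CryptoAsset:
    system: str
    algorithm: str
    key_bits: int
    protection_needed_until: int  # last year the protected data must stay secure


def is_exposed(asset, horizon_year=2030):
    """Step two: flag assets whose sensitivity window outlives the planning horizon."""
    return asset.algorithm in QUANTUM_VULNERABLE and asset.protection_needed_until > horizon_year


def migration_order(assets, horizon_year=2030):
    """Step three: exposed assets only, longest-lived data first."""
    exposed = [asset for asset in assets if is_exposed(asset, horizon_year)]
    return sorted(exposed, key=lambda asset: asset.protection_needed_until, reverse=True)


# Step one: a (hypothetical) inventory.
inventory = [
    CryptoAsset("public-web-tls", "ECDH", 256, 2027),
    CryptoAsset("records-archive", "RSA", 2048, 2055),
    CryptoAsset("code-signing", "ECDSA", 256, 2035),
    CryptoAsset("backup-encryption", "AES", 256, 2040),
]

for asset in migration_order(inventory):
    print(asset.system, asset.algorithm, asset.protection_needed_until)
```

Sorting on a single field is a deliberate simplification. A working programme would weight data classification, system criticality and migration difficulty as well.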

There is a second update to factor into that four-step framing, and it affects organisations that have already started their migration. In March 2026, a Google Quantum AI team (Babbush, Zalcman, Gidney et al.) published resource estimates for the 256-bit Elliptic Curve Discrete Logarithm Problem: the mathematical problem that underpins ECDSA and ECDH, which are the algorithms protecting most modern TLS sessions, code signing, SSH, and digital signatures. Their estimate: fewer than 500,000 physical qubits, with a runtime of approximately nine minutes after precomputation. That is roughly half the hardware cost of the RSA-2048 factoring estimate, and days faster in execution. Organisations that migrated from RSA to ECC thinking they had bought time have not reduced their quantum exposure. They have moved to an algorithm that falls at a lower hardware threshold and in minutes rather than days. The mitigation is unchanged: ML-KEM and ML-DSA address both RSA and ECC threats. But if your migration plan reads "move from RSA to ECC by 2026, then PQC by 2030", that plan no longer works. ECC is not an interim safe harbour. It is an interim step that arrives at the same destination sooner.

Scores are comparative (1 = low, 5 = high) and represent analytical judgements based on primary source documents. They are not outputs of a formal methodology. NSA and NIST are US bodies; BSI and ANSSI are European; NCSC is UK. ANSSI and BSI lead on hybrid mandate, reflecting the European belt-and-braces approach. NSA leads on enforceability and urgency signal.

Sources: NSA CNSA 2.0 (2022) | NIST IR 8547 IPD (2024) | NCSC Migration Timelines (2025) | BSI TR-02102-1 (Version 2026-01) | ANSSI Position Paper (2022) | ANSSI Follow-up (2023)

QSECDEF's Q-Day Timeline Risk Calculator and HNDL Risk Calculator are the practical tools for applying the Mosca Theorem to your specific data classification and migration timeline. The Q-Day Calculator takes your data sensitivity window and estimated migration duration and maps them to the GRI probability distribution. The HNDL Calculator assesses current exposure based on algorithm deployment. Neither substitutes for a structured migration programme, but both give you the quantified starting point for the prioritisation argument.

Where to Start

The 2030 deadline is not aspirational. It is binding for any organisation with NIS2 exposure in Europe, any US national security contractor purchasing new systems after January 2027, and any entity operating under BSI or ANSSI guidance for critical infrastructure. For organisations outside those regulatory perimeters, the GRI probability distribution provides the risk-based equivalent: a 34% chance of a CRQC by 2030 is the kind of probability that no competent risk function treats as background noise.

The tools below operationalise the Mosca Theorem for your specific situation. The Q-Day Timeline Risk Calculator takes your data sensitivity window and migration timeline and outputs the risk exposure under the GRI probability distribution. The HNDL Risk Calculator assesses your current exposure based on which algorithms are protecting which data today. Use them as the quantified starting point for the programme conversation, not as a substitute for it.

- Q-Day Timeline Risk Calculator: apply the Mosca Theorem to your specific data classification and migration timeline

- HNDL Risk Calculator: assess your current exposure to harvest-now-decrypt-later collection

If your results show high-priority exposure, the next step is a structured migration programme with defined milestones. The QSECDEF membership network connects you to practitioners who have done this in regulated and defence environments. View membership options or contact the team directly.